DarkReading

FCC Reveals 'Royal Tiger' Robocall Campaign

In a first-ever move, the commission's enforcement bureau has high hopes that official classification will allow law enforcement partners to better combat these kinds of threats.

SecurityWeek

Palo Alto Networks and IBM have announced a significant partnership to jointly provide cybersecurity solutions.

CyberNews

A Russia-linked group is automating fake news fabrication and publishing with AI.

Cyber Security News

Staying informed is key in the dynamic battle of cybersecurity. This weekly news recap covers the newest trends, vulnerabilities, breaches, and possible defense mechanisms.

HACKRead

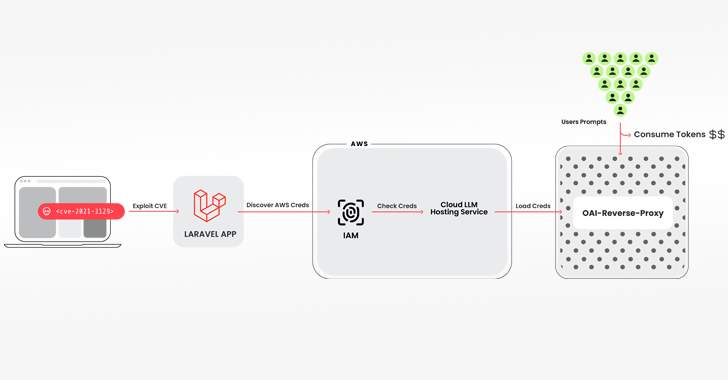

Researchers have discovered a novel cyberattack scheme, dubbed LLMjacking, in which threat actors gain access to the cloud environment.

SecurityWeek

Hundreds of companies are showcasing their products and services this week at the 2024 edition of the RSA Conference in San Francisco.

The Hacker News

Researchers have uncovered a new attack called "LLMjacking" that targets large language models (LLMs) hosted on cloud services.

Infosecurity News

Sysdig said the attackers gained access to these credentials from a vulnerable version of Laravel

SecurityWeek

Despite the current lack of large-scale criminal exploitation of gen-AI, researchers highlight indications that this may change.

CyberNews

Amazon has announced the preview launch of Amazon Bedrock Studio, which allows developers to build generative artificial intelligence applications.

Bleeping Computer

OpenAI and Stack Overflow recently teamed up to improve AI models. OpenAI will have access to Stack Overflow's API and feedback from developers. In return, OpenAI will link to Stack Overflow's content in ChatGPT.

Infosecurity News

An ISACA survey found that just a third of organizations are adequately addressing security, privacy and ethical risks with AI

DarkReading

While attackers have targeted AI systems, failures in AI design and implementation are far more likely to cause headaches, so companies need to prepare.

Security Magazine

A new report reveals that a majority of data experts agree that artificial intelligence is increasing data security challenges.

SC Magazine

Large language models (LLMs) provide context that could expose overlooked threats.

DarkReading

Large language models promise to enhance secure software development life cycles, but there are unintended risks as well, CISO warns at RSAC.

SC Magazine

Security pros advise teams to use demo apps such as ChatRTX mainly in a test environment.

Ars Technica

Andrej Karpathy muses about sending an LLM binary that could "wake up" and answer questions.

SecurityWeek

Horizon3.ai's AISaaS-based, AI-assisted penetration service allows proactive defensive action against exploitation of new vulnerabilities.

CSO

Tools, platforms, and services that the CSO team recommends 2024 RSA Conference attendees check out.

SecurityWeek

DeepKeep, which provides an AI-Native Trust, Risk, and Security Management (TRiSM) platform, has raised $10 million in seed funding.

SC Magazine

Vulnerability exploits, pure extortion and internal risks are on the rise, while AI threats fall short.

Ars Technica

Mystery LLM highlights transparency issues in AI testing.

CSO

The new offering is aimed at protecting against prompt injection, data leakage, and training data poisoning in LLM systems.

The Cyber Express

A complaint lodged by privacy advocacy group Noyb with the Austrian data protection authority (DSB) alleged that ChatGPT's generation of

Ars Technica

CEO-heavy board to tackle elusive AI safety concept and apply it to US infrastructure.

Infosecurity News

European non-profit Noyb has filed a complaint to the Austrian data protection authority (DSB) over OpenAI’s ChatGPT providing false personal information

Cyber Security News

Welcome to this week's edition of the Cyber Security News Weekly Round-Up. This issue covers the latest vulnerabilities, cyber attacks, and emerging threats that have been making headlines. Stay informed and stay secure!

DarkReading

Our collection of the most relevant reporting and industry perspectives for those guiding cybersecurity strategies and focused on SecOps. Also included: security license mandates; a move to four-day remediation requirements; lessons on OWASP for LLMs.

Ars Technica

Microsoft’s 3.8B parameter Phi-3 may rival GPT-3.5, signaling a new era of “small language models.”

CyberNews

Large language models (LLMs) such as GPT-4 can exploit one-day vulnerabilities, researchers find.

Cyber Security News

Large language models (LLMs) have achieved superhuman performance on many benchmarks, leading to a surge of interest in LLM agents capable

The Hacker News

North Korea's state-linked hackers are enhancing their operations with advanced artificial intelligence tools.

CyberNews

“We believe these are the best open source models of their class, period,” Meta said.

Ars Technica

Zuckerberg says new AI model "was still learning" when Meta stopped training.

DarkReading

Existing AI technology can allow hackers to automate exploits for public vulnerabilities in minutes flat. Very soon, diligent patching will no longer be optional.

SecurityWeek

Inside the four pillars of an offensive playbook – Red Teams, Penetration Testing, Automation and AI, and vulnerability assessment.

CyberNews

Google has trained a large language model to provide natural language explanations of its hidden representations.

SC Magazine

The “Crescendo” attack uses a chain of seemingly benign prompts to achieve an adverse output.

Ars Technica

Gemini 1.5 Pro launch, new version of GPT-4 Turbo, new Mistral model, and more.

DarkReading

Our collection of the most relevant reporting and industry perspectives for those guiding cybersecurity strategies and focused on SecOps. Also included: facing hard truths in software security, and the latest guidance from NSA.

CyberNews

Microsoft says that it’s developed novel techniques to fight against two attacks that malicious actors use to jailbreak AI systems.

Security Affairs

TA547 group is targeting dozens of German organizations with an information stealer called Rhadamanthys, Proofpoint warns.

SC Magazine

A PowerShell script used to deploy the infostealer contains unusually specific comments, researchers say.

SecurityWeek

With $10 million in funding, cybersecurity startup Simbian is building a fully autonomous security platform using AI.

SecurityWeek

Startup Knostic emerges from stealth mode with $3.3 million in funding and a gen-AI access control product for enterprises.

CyberNews

Cybercriminals impersonating legitimate German companies are attacking organizations in the country.

DarkReading

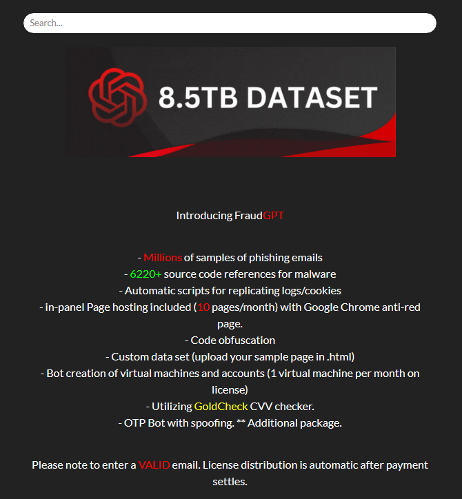

It's finally happening: Rather than just for productivity and research, threat actors are using LLMs to write malware. But companies need not worry just yet.

Infosecurity News

Proofpoint said this is the first time the threat actor has been seen using LLM-generated PowerShell scripts

The Record

Chinese state-backed hackers focused their cyberattacks on the South Pacific Islands, the South China Sea region and the US defense industrial base, according to a report from the Microsoft Threat Analysis Center (MTAC).

DarkReading

Our collection of the most relevant reporting and industry perspectives for those guiding cybersecurity strategies and focused on SecOps. Also included: Dealing with a Ramadan cyber spike; funding Internet security; and Microsoft's Azure AI changes.

The Hacker News

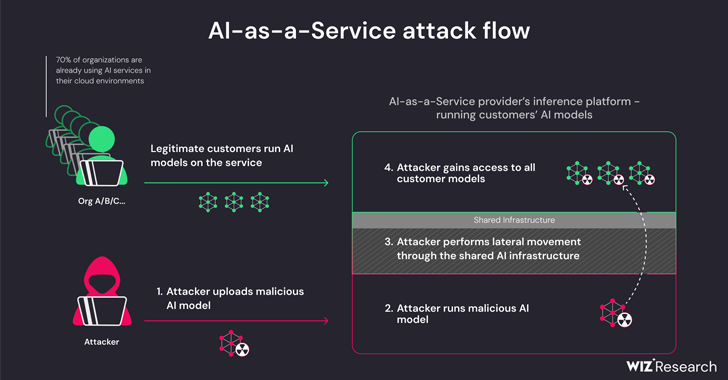

New research reveals critical security risks for AI-as-a-service providers like Hugging Face. Attackers could gain access to hijack models, escalate

Infosecurity News

A Microsoft report found that China-affiliated actors are publishing AI-generated content on social media to amplify controversial domestic issues in the US

Ars Technica

People are more like AI language models than you might think. Here are some prompting tips.

CyberNews

Apple researchers say that they’re working on large language models that can understand context, with one “substantially outperforming” GPT-4.

DarkReading

While some cybercriminals have bypassed guardrails to force legitimate AI models to turn bad, building their own platforms and making use of open source models are a greater threat.

The Cyber Express

By Mr. Zakir Hussain, CEO – BD Software Distribution. As digital landscapes morph and expand, cybersecurity challenges intensify. The fusion

SecurityWeek

Generative-AI security startup SydeLabs emerges from stealth mode with $2.5 million in seed funding led by RTP Global.

DarkReading

The tendency of popular AI-based tools to recommend nonexistent code libraries offers a bigger opportunity than thought to distribute malicious packages.

SecurityWeek

Artificial intelligence computing giant NVIDIA patches two security bugs in ChatRTX for Windows, warning of risk of code execution attacks.

Ars Technica

Anthropic's Claude 3 is first to unseat GPT-4 since launch of Chatbot Arena in May '23.

CyberSecurity Dive

As the AI ecosystem grows and more tools connect to internal data, threat actors have a wider field to introduce vulnerabilities.

Cyber Security News

Despite JavaScript's widespread use, writing secure code remains challenging, leading to web application vulnerabilities.

SecurityWeek

Two Bear Capital leads a venture capital bet on Dymium, a California startup building data protection technologies.

DarkReading

The UAE leads the Middle East in digital-transformation efforts, but slow patching and legacy technology continue to thwart its security posture.

Ars Technica

Sources say to expect OpenAI's next major AI model mid-2024, according to a new report.

The Hacker News

Generative AI is revolutionizing industries, but not without its challenges. A security breach could mean exposure of sensitive data.

CSO

Pig butchering, inheritance, and humanitarian relief scams jumped in 2023, aided by an AI-backed adversary toolset.

The Hacker News

Generative AI can modify malware source code to bypass string-based detection, significantly lowering the rates at which they're caught, according to

Ars Technica

With Apple's own AI tech lagging behind, the firm looks for a fallback solution.

SecurityWeek

SecurityWeek interviews Stephanie Carruthers, Chief People Hacker at X-Force Red, IBM Security, about social engineering.

Security Affairs

A new round of the weekly Security Affairs newsletter arrived! Every week the best security articles from Security Affairs are free for you.

Ars Technica

LLMs are trained to block harmful responses. Old-school images can override those rules.

Ars Technica

OpenAI's GPT-2 running locally in Microsoft Excel teaches the basics of how LLMs work.

The Hacker News

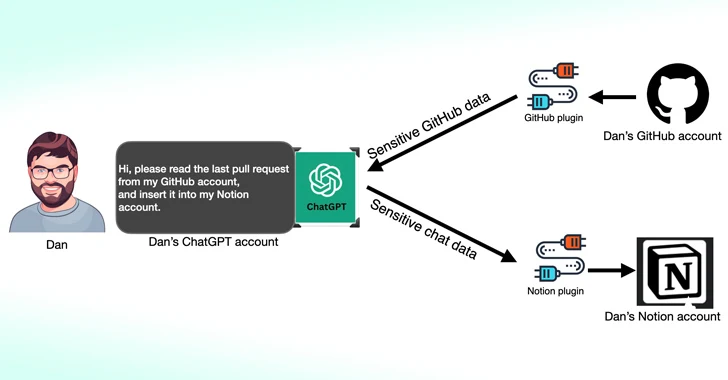

Cybersecurity experts have uncovered new vulnerabilities in ChatGPT's third-party plugins, posing a significant risk to user data and accounts.

Ars Technica

All non-Google chat GPTs affected by side channel that leaks responses sent to users.

SecurityWeek

There are legitimate reasons for banning GenAI tools, but this should only be done after considering their data and security policies.

Cyber Security News

Researchers discovered multiple vulnerabilities in Google's Gemini Large Language Model (LLM) family, including Gemini Pro and Ultra, that allow attackers to manipulate the model's response through prompt injection.

DarkReading

Research is latest in a growing body of work to highlight troubling weaknesses in widely used generative AI tools.

The Hacker News

Google's Gemini large language model faces vulnerabilities that could lead to security breaches, including leaking system prompts & generating harmful

SC Magazine

Other flaws could leak ChatGPT conversations and third-party account details, researchers found.

Bleeping Computer

GitHub users accidentally exposed 12.8 million authentication and sensitive secrets in over 3 million public repositories during 2023, with the vast majority remaining valid after five days.

DarkReading

Like ChatGPT and other GenAI tools, Gemini is susceptible to attacks that can cause it to divulge system prompts, reveal sensitive information, and execute potentially malicious actions.

Infosecurity News

AI scientist Inma Martinez predicts governments will start requiring full disclosure from ‘frontier’ AI labs on the purpose of the tools they are developing

Latest Hacking News

The privacy-focused browser Brave has now developed an exciting AI experience for Android users. As announced, Brave has launched ‘Leo’ as its very own AI assistant for Android browsers, hoping to enhance users’ experience. Brave AI

Ars Technica

"The open in OpenAI means that everyone should benefit from the fruits of AI after it's built."

HACKRead

Over 225,000 infostealer logs containing compromised ChatGPT credentials were detected between January and October 2023.

PCMag

Research suggests new AI security threats are on the horizon. Cloudflare is developing an AI Firewall and is using its own AI tools to defend against AI-powered cyberattacks.

The Hacker News

Over 100 AI/ML models discovered with malicious intent on the Hugging Face platform. The cyber realm faces a new threat.

CSO

Added to Cloudflare’s Web Application Firewall (WAF) offerings, Firewall for AI is designed to prevent the exploitation of AI models, specifically generative AI.

DarkReading

Convincing phishing emails, synthetic identities, and deepfakes all have been spotted in cyberattacks on the continent.

Bleeping Computer

Brave Software is the next company to jump into AI, announcing that a new privacy-preserving AI assistant called "Leo" is rolling out on the Android version of its browser through the latest release, version 1.63.

DarkReading

The finding underscores the growing risk of weaponizing publicly available AI models and the need for better security to combat the looming threat.

SecurityWeek

AI's progress in 2024 and beyond: 2023 was a year of hype, 2024 brings the beginning of AI reality, and 2025 is likely to be its delivery.

The Hacker News

Over 10 million secrets were exposed in public GitHub commits last year alone. Are your secrets safe? Learn how to protect your data in the age of AI.

DarkReading

Organizations boost cybersecurity budgets to tackle data-privacy and cloud-security threats amid speedy adoption of generative AI.

Infosecurity News

The OWASP Foundation provides new guidelines to deploy secure-by-design LLM use cases

Ars Technica

Gemma chatbots can run locally, and they reportedly outperform Meta's Llama 2.

CyberNews

Romance scams might be the most damaging of all, as victims are not only scammed out of thousands of dollars but also have their romantic dreams crushed overnight.
